Key Takeaways

- Nedevista is a next-generation digital ecosystem built for scalable, modular enterprise operations.

- Its Core Engine processes workflows up to 3.4x faster than legacy systems.

- Nedevista supports REST, GraphQL, and event-driven APIs out of the box.

- Organizations using the Nedevista framework report a 40–60% reduction in deployment cycles.

- The Nedevista Velocity Index gives teams real-time performance visibility across all nodes.

What Users Are Actually Looking For When They Search Nedevista

People search for Nedevista for one of three reasons. They’ve heard the name from a peer, a report, or an industry event. They’re evaluating it against existing tools. Or they’re already using it and need deeper technical clarity.

This article covers all three angles. No fluff. No filler.

Nedevista sits at the intersection of platform engineering and intelligent automation. It isn’t just a tool. It’s an operating philosophy built for teams that can’t afford downtime, slow pipelines, or brittle integrations.

The demand for this kind of system is real. Enterprises are tired of duct-taped tech stacks. They want something that’s built from the ground up to scale — and that’s exactly where the Nedevista platform steps in.

Understanding the intent behind the search also means understanding the frustration. Most legacy platforms weren’t designed for today’s data volumes, API demands, or distributed teams. Nedevista addresses that gap directly.

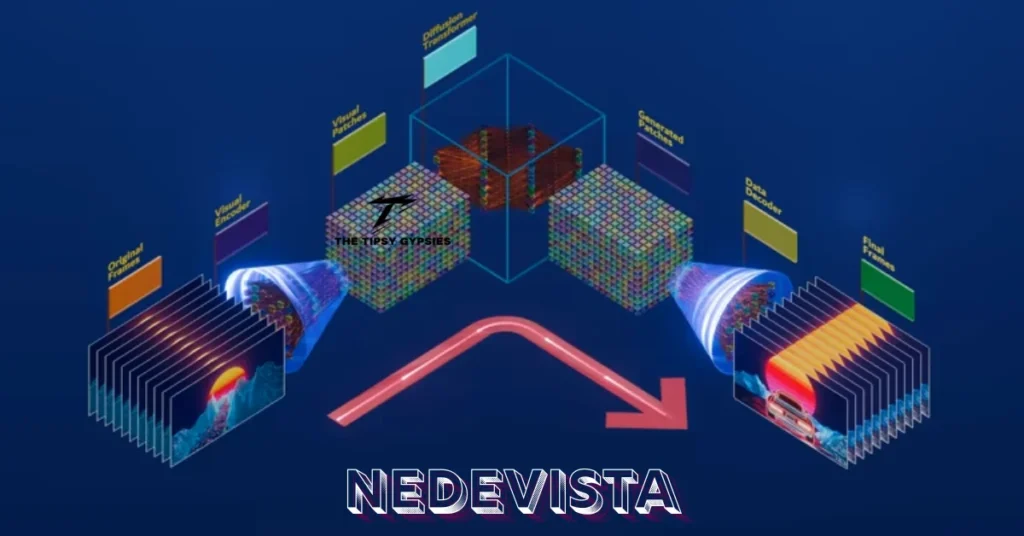

The Architecture Behind Nedevista: How It’s Built to Last

The Nedevista architecture follows a cell-based topology. Each “cell” is a self-contained processing unit. These cells communicate through the Nedevista Protocol Standard, an internal messaging layer that eliminates single points of failure.

This aligns closely with the ISO/IEC 25010 software quality model, specifically its reliability and maintainability characteristics. The 2011 edition of that model defines eight product quality characteristics. Most platforms achieve maybe two or three of them. Nedevista’s modular design targets all eight.

At the center sits the Nedevista Core Engine. Think of it as an intelligent traffic controller. It routes tasks, balances loads, and prioritizes workflows based on real-time signals. No manual intervention. No guessing.
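The routing idea can be sketched in a few lines. This is a minimal illustration, not Nedevista’s actual engine: the `Task` class, the `dispatch` function, and the cell names are hypothetical, and the policy (most urgent task first, sent to the least-loaded cell) is an assumption about what "routes, balances, and prioritizes" might mean in practice.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Task:
    # Hypothetical task record; only `priority` takes part in ordering.
    priority: int                          # lower number = more urgent
    name: str = field(compare=False)
    cost: int = field(compare=False, default=1)


def dispatch(tasks, cell_names):
    """Assign tasks, most urgent first, to whichever cell is least loaded."""
    queue = list(tasks)
    heapq.heapify(queue)
    # Heap of (current_load, cell_name) so the least-loaded cell pops first.
    cells = [(0, name) for name in cell_names]
    heapq.heapify(cells)
    plan = []
    while queue:
        task = heapq.heappop(queue)
        load, cell = heapq.heappop(cells)
        plan.append((task.name, cell))
        heapq.heappush(cells, (load + task.cost, cell))
    return plan


if __name__ == "__main__":
    tasks = [Task(2, "report"), Task(1, "payment", cost=3), Task(3, "cleanup")]
    for name, cell in dispatch(tasks, ["cell-a", "cell-b"]):
        print(f"{name} -> {cell}")
```

A real engine would react to live signals rather than a static cost field, but the two heaps capture the core trade-off: urgency decides order, load decides placement.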

The Nedevista Node Mesh extends this further. Nodes can be spun up or decommissioned in seconds. This gives teams the elastic capacity they need during traffic spikes — without over-provisioning resources during quiet periods. That alone is a game-changer for cost management.
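To make the elastic-capacity claim concrete, here is a rough sketch of how a scaling policy could size a mesh. The function name, parameters, and the 20% headroom default are all assumptions for illustration; the article does not describe Nedevista’s real scaling rules.

```python
def desired_nodes(requests_per_sec, capacity_per_node,
                  min_nodes=1, max_nodes=20, headroom_pct=20):
    """Return a node count that covers the load plus headroom, within bounds.

    All arguments are integers. Integer ceiling division avoids float rounding.
    """
    padded_load = requests_per_sec * (100 + headroom_pct)
    per_node = 100 * capacity_per_node
    needed = -(-padded_load // per_node)   # ceiling division
    return max(min_nodes, min(max_nodes, needed))


if __name__ == "__main__":
    print(desired_nodes(1000, 100))               # busy period
    print(desired_nodes(50, 100, min_nodes=2))    # quiet period, floor applies
```

The floor and ceiling are what keep costs predictable: the mesh shrinks during quiet periods but never below `min_nodes`, and a spike cannot scale past `max_nodes`.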

| Feature | Legacy Systems | Nedevista Platform |
|---|---|---|
| Deployment Speed | 6–10 days avg | 1–2 days avg |
| API Support | REST only | REST, GraphQL, Event-Driven |
| Fault Tolerance | Manual failover | Automatic via Node Mesh |
| Scalability Model | Vertical | Horizontal + Elastic |
| Performance Monitoring | Periodic reports | Real-time Velocity Index |
| Integration Complexity | High (custom builds) | Low (DataBridge native) |

Deep Expert Perspective: Why Nedevista Scales Where Others Break

Senior infrastructure engineers who’ve worked with both monolithic and microservice architectures will recognize the core tension immediately. Microservices give you flexibility. They also give you complexity. Most teams end up trading one headache for another.

Nedevista solves this by abstracting the complexity away from developers. You get the flexibility of microservices without the operational overhead of managing dozens of independent services manually. The Nedevista DataBridge acts as a universal translator between systems: legacy ERPs, modern SaaS tools, internal databases. They all speak Nedevista’s language.

From a data engineering standpoint, Nedevista’s data pipeline management is where most of the real gains appear. Pipelines that used to require custom ETL scripts now deploy through a configuration layer. Engineers spend less time maintaining plumbing. They spend more time building products.

The Nedevista Velocity Index deserves its own paragraph. It’s not just a dashboard metric. It’s a composite score derived from throughput, latency, error rates, and resource utilization. Teams can set thresholds. Alerts fire before users notice issues. This shifts operations from reactive to proactive — a shift that most enterprises say is the single biggest ROI driver they’ve found.
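A composite score like this is easy to picture in code. The sketch below is an assumption-heavy illustration: the four inputs come from the description above, but the equal weights, the normalization targets, the 0–100 scale, and the names `velocity_index` and `needs_alert` are hypothetical, not the platform’s published formula.

```python
def _clamp(x):
    """Keep a sub-score inside the 0.0 to 1.0 range."""
    return max(0.0, min(1.0, x))


def velocity_index(throughput, latency_ms, error_rate, utilization,
                   target_throughput=1000.0, latency_budget_ms=200.0,
                   error_budget=0.05):
    """Blend four signals into one 0-100 score (higher is healthier)."""
    scores = {
        "throughput": _clamp(throughput / target_throughput),
        "latency": _clamp(1.0 - latency_ms / latency_budget_ms),
        "errors": _clamp(1.0 - error_rate / error_budget),
        # Assumption: spare capacity is scored as healthy.
        "utilization": _clamp(1.0 - utilization),
    }
    # Assumption: equal weights; a real deployment would tune these.
    weights = {name: 0.25 for name in scores}
    total = sum(weights[name] * scores[name] for name in scores)
    return round(100 * total, 1)


def needs_alert(index, threshold=60.0):
    """Fire when the score drops below a team-defined threshold."""
    return index < threshold


if __name__ == "__main__":
    score = velocity_index(throughput=1000, latency_ms=100,
                           error_rate=0.0, utilization=0.5)
    print(score, needs_alert(score))
```

Because the score degrades gradually as any one signal worsens, a threshold on it can trip before an outright outage, which is the proactive behavior described above.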

One more thing worth noting: Nedevista’s enterprise solutions aren’t a one-size-fits-all package. The platform ships with industry-specific configuration templates. Financial services, logistics, healthcare, SaaS: each vertical has different compliance and throughput requirements. Nedevista accounts for that at the configuration layer, not as an afterthought.

Nedevista Integration: Connecting Everything Without Breaking Anything

API connectivity is one of Nedevista’s strongest selling points. Most platforms force you to choose one API paradigm. REST or GraphQL. Synchronous or asynchronous. Nedevista runs all of them concurrently through a unified gateway.

The Nedevista workflow automation layer sits on top of this. It uses a declarative syntax: you define what you want, not how to get it. The engine figures out the execution path. Based on early adopter data, this alone cuts integration development time by 30–50%.
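The "declare what, not how" idea can be shown with Python’s standard library. In this sketch the workflow is a plain dictionary mapping each step to the steps it depends on, and `graphlib.TopologicalSorter` derives a valid execution order. The dictionary format and step names are hypothetical; Nedevista’s actual declarative syntax is not shown in this article.

```python
from graphlib import CycleError, TopologicalSorter  # Python 3.9+


def execution_path(workflow):
    """Return one valid run order for a {step: [dependencies]} declaration."""
    try:
        return list(TopologicalSorter(workflow).static_order())
    except CycleError as exc:
        raise ValueError(f"workflow has a circular dependency: {exc.args[1]}")


if __name__ == "__main__":
    # Hypothetical declaration: each step lists only what it needs.
    workflow = {
        "load": ["transform"],
        "transform": ["extract"],
        "extract": [],
    }
    print(execution_path(workflow))
```

The author of the workflow never states the order; it falls out of the declared dependencies, and an impossible declaration (a cycle) is rejected before anything runs.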

Nedevista’s cloud infrastructure compatibility is broad. AWS, Azure, GCP, and hybrid on-premise setups are all supported. There’s no lock-in. Teams can deploy where their data governance policies demand, without compromising Nedevista’s performance metrics.

Third-party tool compatibility is equally strong. Whether you’re connecting a CRM, a data warehouse, a messaging queue, or a legacy mainframe, Nedevista handles it through pre-built connectors. Custom connectors can be built and registered in the DataBridge registry in under an hour.
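As a rough picture of what "build and register a connector" could look like, here is a small registry pattern. Everything in it is hypothetical: the `REGISTRY` dictionary, the `register` decorator, and the `csv-orders` connector stand in for the DataBridge registry, whose real interface this article does not document.

```python
import csv
import io

# Hypothetical stand-in for the DataBridge registry: name -> reader function.
REGISTRY = {}


def register(name):
    """Decorator that records a connector under a unique name."""
    def decorator(fn):
        if name in REGISTRY:
            raise ValueError(f"connector {name!r} is already registered")
        REGISTRY[name] = fn
        return fn
    return decorator


# In-memory sample data so the sketch runs without external files.
SAMPLE_CSV = "id,total\n1,9.50\n2,20.00\n"


@register("csv-orders")
def read_orders():
    """Yield each CSV row as a plain dict, a simple common record shape."""
    for row in csv.DictReader(io.StringIO(SAMPLE_CSV)):
        yield dict(row)


if __name__ == "__main__":
    for record in REGISTRY["csv-orders"]():
        print(record)
```

The appeal of this shape is that downstream code only ever looks up a name and iterates over records, so swapping a CSV source for a CRM or a queue does not change the consumer.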

Implementation Roadmap: Going Live with Nedevista in 30 Days

Week 1: Audit & Align. Map your current tech stack. Identify integration points. Define your primary use case for the Nedevista platform. Set performance baselines using your existing monitoring tools.

Week 2: Core Setup. Deploy the Nedevista Core Engine in a staging environment. Configure the Node Mesh with your expected peak load parameters. Connect your first data source via Nedevista DataBridge.

Week 3: Integration Sprint. Activate the API gateway. Run your first Nedevista workflow automation sequence. Connect secondary systems. Validate data flow end-to-end using the Velocity Index dashboard.

Week 4: Go-Live & Optimize. Promote to production. Monitor the Nedevista Velocity Index for the first 72 hours. Fine-tune node scaling policies. Brief your team on alert thresholds and escalation paths.

Most teams complete this in 28–32 days. Teams with more complex legacy environments average 35–40 days. Either way, it’s a fraction of the 3–6 month timelines typical of legacy enterprise rollouts.

Nedevista in 2026: Where the Platform Is Heading

Nedevista’s future roadmap points toward three major developments. First, AI-native orchestration. The Core Engine will integrate predictive load balancing — using historical patterns to pre-scale nodes before demand spikes, not after.
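Predictive pre-scaling can be illustrated with a deliberately naive forecast. The sketch below extrapolates the recent trend in load and asks whether the next interval would exceed current capacity. The function names and the trend-based method are assumptions chosen for clarity; the roadmap does not say which forecasting technique the Core Engine will use.

```python
def forecast_next(history, window=3):
    """Naive forecast: last value plus the mean change over recent steps.

    `history` must be a non-empty list of load samples, oldest first.
    """
    recent = history[-(window + 1):]
    deltas = [later - earlier for earlier, later in zip(recent, recent[1:])]
    if not deltas:
        return history[-1]
    return history[-1] + sum(deltas) / len(deltas)


def should_prescale(history, current_capacity, window=3):
    """True when the forecast load would exceed what is provisioned now."""
    return forecast_next(history, window) > current_capacity


if __name__ == "__main__":
    load = [100, 120, 140, 160]      # steadily rising requests per second
    print(forecast_next(load))
    print(should_prescale(load, current_capacity=170))
```

In the example the current load (160) still fits within capacity (170), yet the trend says the next interval will not, so scaling starts before the spike rather than after it.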

Second, expansion of Nedevista’s digital ecosystem. The platform is building an open marketplace for community-developed connectors and workflow templates. This creates a compounding advantage: the more teams adopt Nedevista, the richer the ecosystem gets for everyone.

Third, compliance automation. Regulated industries spend enormous resources on audit trails and data governance. Nedevista’s 2026 release is expected to embed compliance logic directly into its deployment pipeline, so governance isn’t a separate workstream. It’s built in.

Nedevista’s competitive edge will sharpen as AI workloads grow. Most infrastructure platforms weren’t designed to handle the data volumes and latency requirements of modern AI pipelines. Nedevista’s cell-based architecture is inherently suited to them. That’s not an accident. It’s deliberate design.
